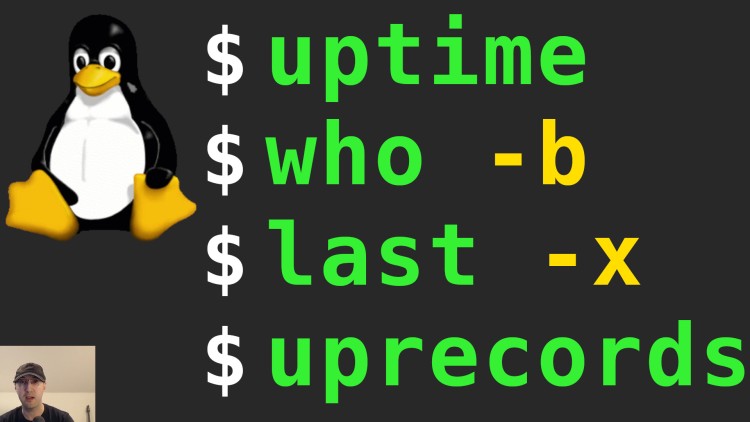

Check When a Server Was Shut Down or Rebooted with uptime, who and last

This can help detect how long a server has been up or when you may have powered it off. We'll track reboots too.

Here’s a couple of questions we’ll answer by using a few different tools:

- How long has a machine been up?

- When was a machine shut down or powered down?

- When was a machine rebooted?

- How can you track the uptime of each boot and the total downtime over a machine’s lifetime?

We’ll be using built-in tools such as uptime, who, w and last, as well as a third-party tool called uprecords, which is available in most package managers as uptimed.

# Commands

Let’s start answering the above questions.

## Using the uptime Command

Here’s a couple of different ways to use this command to find how long a box has been up:

$ uptime

23:40:58 up 981 days, 8:21, 1 user, load average: 0.00, 0.00, 0.00

$ uptime --since

2020-08-13 15:19:32

$ uptime --pretty

up 2 years, 36 weeks, 1 day, 8 hours, 21 minutes

The command without flags is pretty handy. You can see how long it’s been up, how many users are logged in and a snapshot of the system’s load.

The --since|-s flag returns only the date and time the box came up. That could be after a reboot or after powering it on.

The --pretty|-p flag shows the time elapsed since --since in a human-readable form.
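If you want these values in a script, or you are on a system whose uptime lacks --since, Linux exposes the raw number of seconds since boot in `/proc/uptime`. Here is a minimal sketch of turning that number into something readable. The function names `pretty_uptime` and `boot_epoch` are made up for this example, it assumes a Linux box with `/proc` mounted, and it only breaks the time down into days, hours and minutes rather than the years and weeks that `uptime --pretty` prints.

```shell
#!/bin/sh
# Hypothetical helpers for illustration; they are not part of uptime itself.

# Convert a number of seconds into "up D days, H hours, M minutes".
pretty_uptime() {
    secs=${1%.*}                       # drop fractional seconds if present
    days=$((secs / 86400))
    hours=$((secs % 86400 / 3600))
    mins=$((secs % 3600 / 60))
    echo "up $days days, $hours hours, $mins minutes"
}

# Boot time as a Unix timestamp: current time minus seconds of uptime.
boot_epoch() {
    now=$1
    up=${2%.*}
    echo $((now - up))
}

# Usage on a Linux box (assumes /proc/uptime exists):
#   read -r up _ < /proc/uptime
#   pretty_uptime "$up"
#   boot_epoch "$(date +%s)" "$up"
```

Feeding it the uptime from the example above (981 days, 8 hours and 21 minutes is 84788460 seconds) gives back the same breakdown.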

## Using the who and w Commands

We’ll answer the question of when the box was last booted up (similar to uptime):

$ who --boot

system boot 2020-08-13 15:19

You should get the same boot time as uptime --since, only without the seconds. I tend to use uptime instead of who if I want to know the uptime, since uptime as a command name feels more descriptive.

But you can use who --all|-a to get additional information:

$ who --all

system boot 2020-08-13 15:19

run-level 5 2020-08-13 15:19

LOGIN ttyS0 2020-08-13 15:19 761 id=tyS0

LOGIN tty1 2020-08-13 15:19 771 id=tty1

nick + pts/0 2023-04-21 23:40 . 1026880 (XX.XX.XX.XX)

pts/1 2021-10-30 23:52 3338628 id=ts/1 term=0 exit=0

pts/2 2021-07-25 18:50 1626320 id=ts/2 term=0 exit=0

pts/3 2021-07-24 23:59 1609853 id=ts/3 term=0 exit=0

That shows things like the boot time, dead processes, login processes, processes spawned by your init system, the runlevel, the last time your system clock changed and a user’s message status. The XX.XX.XX.XX would be the IP address a user is connecting from.

In the above case I was SSH’d into a server as the nick user.

There’s also the w command which will show the uptime along with who’s logged in:

$ w

23:41:27 up 981 days, 8:22, 1 user, load average: 0.00, 0.05, 0.02

USER TTY FROM LOGIN@ IDLE JCPU PCPU WHAT

nick pts/0 X.X.X.X 00:05 2.00s 0.06s 0.00s w

This can be handy to see who’s connected to a machine in case you’re planning to reboot. You can run man w to learn more details about each column.
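For the "is anyone else on this box before I reboot?" check, a small filter over the output of `who` can do the job. This is only a sketch: `other_users` is a hypothetical helper name, and it assumes the user name is the first column of each line, which is how `who` prints by default.

```shell
#!/bin/sh
# Print each distinct user name from who-style input on stdin,
# skipping the user passed as the first argument.
other_users() {
    awk -v me="$1" '$1 != me && !seen[$1]++ { print $1 }'
}

# Usage:
#   who | other_users "$(id -un)"
```

If that prints nothing, you are the only one logged in.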

## Using the last Command

This will help us know when a server was rebooted or shut down over time. It’s handy when you want more than just the uptime or the last reboot time, such as a history of reboot and shutdown events:

$ last --system

nick pts/0 192.168.1.2 Sat Apr 22 12:52 still logged in

nick pts/0 192.168.1.2 Sat Apr 22 10:25 - 10:26 (00:00)

runlevel (to lvl 5) 5.15.0-70-generi Sat Apr 22 10:25 still running

reboot system boot 5.15.0-70-generi Sat Apr 22 10:24 still running

shutdown system down 5.15.0-70-generi Sat Apr 22 10:24 - 10:24 (00:00)

runlevel (to lvl 5) 5.15.0-70-generi Sat Apr 22 10:23 - 10:24 (00:00)

nick pts/0 192.168.1.2 Sat Apr 22 10:23 - 10:24 (00:00)

reboot system boot 5.15.0-70-generi Sat Apr 22 10:23 - 10:24 (00:01)

shutdown system down 5.15.0-70-generi Sat Apr 22 10:21 - 10:23 (00:01)

runlevel (to lvl 5) 5.15.0-70-generi Sat Apr 22 10:20 - 10:21 (00:00)

nick pts/0 192.168.1.2 Sat Apr 22 10:20 - 10:21 (00:00)

reboot system boot 5.15.0-70-generi Sat Apr 22 10:20 - 10:21 (00:00)

shutdown system down 5.15.0-70-generi Sat Apr 22 10:20 - 10:20 (00:00)

nick pts/0 192.168.1.2 Sat Apr 22 10:19 - 10:20 (00:00)

nick pts/0 192.168.1.2 Sat Apr 22 00:17 - 00:17 (00:00)

nick pts/0 192.168.1.2 Sat Apr 22 00:10 - 00:11 (00:00)

wtmp begins Sat Apr 22 00:10:49 2023

If the above is too noisy on your machine you can narrow it down by running last --system|-x reboot or last --system|-x shutdown.
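If you would rather have a quick tally than a list, the same output can be piped through a small filter. The sketch below assumes the `reboot` and `shutdown` pseudo-users appear in the first column, as they do in the output above. `count_boot_events` is a name I made up for this example.

```shell
#!/bin/sh
# Count reboot and shutdown rows in `last -x` style input on stdin.
count_boot_events() {
    awk '$1 == "reboot"   { r++ }
         $1 == "shutdown" { s++ }
         END { printf "reboots=%d shutdowns=%d\n", r, s }'
}

# Usage:
#   last -x | count_boot_events
```

When looking at older events it may also help to add `-F` (`--fulltimes`) to `last`, since the default output leaves out the year.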

You will likely see different results than the uptime or who commands because the last command reads from /var/log/wtmp to get these values and that log is often rotated, at least on Debian and Ubuntu which is what I use.

In the above case, the last line tells us when the logs begin, and you can see they were rotated on the same day I ran the command. That makes this command not very reliable for getting the full history of when a box was rebooted or shut down.

You may only get, let’s say, 1 day’s or 1 month’s worth of stats before the logs get rotated.
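Rotation does not always mean the older records are gone right away. Depending on your logrotate config there may be a previous copy next to the live file, commonly named something like `/var/log/wtmp.1`, and `last` can read a different file with `-f`. Treat the file names here as assumptions about your system. `wtmp_files` is a hypothetical helper that lists the uncompressed candidates.

```shell
#!/bin/sh
# List wtmp files in a log directory that `last -f` can read directly,
# skipping compressed archives such as wtmp.2.gz.
wtmp_files() {
    dir=${1:-/var/log}
    for f in "$dir"/wtmp "$dir"/wtmp.[0-9]*; do
        case $f in
            *.gz|*.xz|*.bz2) continue ;;
        esac
        if [ -f "$f" ]; then
            echo "$f"
        fi
    done
}

# Usage:
#   for f in $(wtmp_files); do last -x -f "$f" reboot shutdown; done
```

This only stretches the history back as far as the rotated copies go, so it does not replace a tool that keeps its own records.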

We’ll go over another tool we can use to get an accurate list, but first let’s go over one use case where this command might still be handy.

For example, one of my clients had a cloud server they created 6 years ago. It had been stopped some time ago but not deleted. Before deleting it we wanted to know when the server was stopped to help figure out when it was last used. We knew the creation time of the system from the cloud provider’s stats but that was it.

The shell history didn’t show any shutdown commands such as sudo shutdown now with a timestamp because the machine was powered off using the cloud provider’s web UI.

I was able to run last -x shutdown to get when the server was powered down.

Since the server was powered down the whole time, no logs could be rotated, so when I turned on the machine and ran the command I saw a date from a few years ago. Everything lined up because that date matched the creation time of the new server they replaced it with. It gave us more confidence that the server was OK to delete.

## Installing uptimed and Using the uprecords Command

This will help us track reboots across a system’s lifetime.

First, let’s look at its output. Keep in mind these stats do not line up with the stats of the previous commands we’ve seen so far in this post. I only started using uprecords recently.

uprecords will only show and track stats after installing the tool. It will not be able to get accurate stats from before it was installed because those stats may not exist due to being rotated away. It’ll start tracking from your current uptime once you install it.

I took these stats from a fairly new VM I set up and rebooted a few times:

$ uprecords

# Uptime | System Boot up

----------------------------+---------------------------------------------------

1 0 days, 20:24:42 | Linux 5.15.0-70-generic Fri Apr 21 13:55:18 2023

2 0 days, 02:32:05 | Linux 5.15.0-70-generic Sat Apr 22 10:24:29 2023

-> 3 0 days, 00:01:37 | Linux 5.15.0-70-generic Sat Apr 22 12:56:46 2023

----------------------------+---------------------------------------------------

1up in 0 days, 02:30:29 | at Sat Apr 22 15:28:51 2023

no1 in 0 days, 20:23:06 | at Sun Apr 23 09:21:28 2023

up 0 days, 22:58:24 | since Fri Apr 21 13:55:18 2023

down 0 days, 00:04:41 | since Fri Apr 21 13:55:18 2023

%up 99.661 | since Fri Apr 21 13:55:18 2023

- By default it lists uptimes ordered by the longest uptime first

- -> is the row associated with your current boot

- up / down near the bottom is cumulative amounts since uptimed has been installed

- In my case the VM was down for 4 minutes when I shut it down for a bit

I think it calculates total downtime from the gaps between uptimes. For example if a box came up at 12:00:00 and was up until 12:10:00 it knows the uptime has been 10 minutes. If the box gets booted up again at 12:15:00 then it can calculate a 5 minute gap between when it was shut down and booted up, so that’s categorized as downtime.
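To make that arithmetic concrete, here is a sketch of the deduction as I understand it. It illustrates the guess above and is not taken from uptimed's source. The helper names are hypothetical, the times are assumed to fall on the same day, and `percent_up` mirrors how a figure like the %up row could be derived from the up and down totals.

```shell
#!/bin/sh
# Convert HH:MM:SS to seconds since midnight.
# awk treats "08" as the number 8, so leading zeros are safe.
to_seconds() {
    echo "$1" | awk -F: '{ print $1 * 3600 + $2 * 60 + $3 }'
}

# Downtime is the gap between the end of one uptime and the next boot.
# Arguments: time the box went down, time it came back up.
downtime_gap() {
    echo $(( $(to_seconds "$2") - $(to_seconds "$1") ))
}

# Percent up = up / (up + down), printed to 3 decimal places.
percent_up() {
    awk -v up="$1" -v down="$2" 'BEGIN { printf "%.3f\n", up / (up + down) * 100 }'
}

downtime_gap 12:10:00 12:15:00                                  # prints 300
percent_up "$(to_seconds 22:58:24)" "$(to_seconds 00:04:41)"    # prints 99.661
```

The second call plugs in the up and down totals from the VM output above and lands on the same 99.661 that uprecords reported.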

One of my sysadmin friends turned me onto using this tool. Here’s more real-world stats from his development box. It includes his 10 longest uptimes:

$ uprecords

# Uptime | System Boot up

----------------------------+---------------------------------------------------

1 61 days, 07:05:42 | Linux 5.10.0-19-amd64 Mon Nov 21 08:34:55 2022

-> 2 45 days, 23:53:14 | Linux 5.10.0-19-amd64 Mon Mar 6 22:20:47 2023

3 30 days, 19:00:50 | Linux 5.10.0-15-amd64 Thu Jul 28 17:23:05 2022

4 27 days, 07:37:19 | Linux 4.19.0-8-amd64 Sat Jun 20 13:50:48 2020

5 24 days, 10:00:47 | Linux 5.10.0-19-amd64 Tue Jan 24 14:36:32 2023

6 24 days, 09:49:57 | Linux 5.10.0-15-amd64 Mon Oct 10 09:41:13 2022

7 18 days, 00:01:00 | Linux 5.10.0-15-amd64 Thu Sep 22 08:26:27 2022

8 16 days, 19:47:05 | Linux 5.10.0-15-amd64 Sun Aug 28 13:03:24 2022

9 15 days, 16:00:51 | Linux 5.10.0-19-amd64 Sat Feb 18 18:44:24 2023

10 14 days, 09:38:45 | Linux 4.19.0-6-amd64 Mon Dec 23 14:49:05 2019

----------------------------+---------------------------------------------------

no1 in 15 days, 07:12:29 | at Sun May 7 06:26:30 2023

up 698 days, 09:23:57 | since Sat Jul 20 09:57:23 2019

down 673 days, 03:52:41 | since Sat Jul 20 09:57:23 2019

%up 50.920 | since Sat Jul 20 09:57:23 2019

An interesting takeaway here is there’s almost exactly the same amount of uptime as there is downtime. That’s because he used to turn his machine off every night when it was in the same room as him and he had other downtime mixed in.

His living conditions changed recently and now he keeps it up 24 / 7. He averages ~20 days of uptime between reboots, which he does to keep security patches up to date, and sometimes he has small power outages. But there were older records that looked like this:

497 0 days, 15:23:48 | Linux 4.19.0-8-amd64 Sun Oct 4 09:43:08 2020

498 0 days, 15:23:24 | Linux 4.19.0-14-amd64 Sun May 9 09:12:23 2021

499 0 days, 15:23:16 | Linux 4.19.0-19-amd64 Sun Mar 27 07:42:52 2022

500 0 days, 15:23:05 | Linux 4.19.0-14-amd64 Thu Oct 14 08:24:35 2021

Here we can see he kept it powered on for ~15 hours at a time. That’s 9 hours of downtime per day on average from years ago. By the way, storing ~500 boot logs (uncompressed) over ~4 years only takes about 40 KB of disk space.

Installing and configuring uptimed:

You can install it on Debian based distros with sudo apt-get install uptimed. You should be able to find it in other distros with the same package name, including Homebrew on macOS. If not you can Google around to find what it might be named elsewhere.

There is a config file at /etc/uptimed.conf. On Debian based systems I’ve noticed LOG_MAXIMUM_ENTRIES defaults to 500 despite the default config being set to 50 on GitHub.

That will log your longest 500 uptimes with no custom configuration.

Personally when I roll with native Linux on my dev box I’ll configure this to 0 for unlimited since the disk space cost is minimal and I want lifetime stats without thinking about it. Storing ~500 boot logs (uncompressed) is only about 40 KB of disk space. I tend to avoid unlimited logging but realistically this feels safe.

One thing to consider is that a server, as opposed to your personal dev box, will very likely never be rebooted 500 times. That’s especially true of cloud servers, where you might create a new box to upgrade to a new major distro version, which means they tend to get recreated every few years. You may never reboot your box even once in that time frame!

Even in the worst case scenario, let’s say you didn’t recreate your server and there are on average 10 critically important minor releases per major distro version that require a reboot. Let’s also say your distro releases major versions about every 2 years.

If you have to reboot to apply those patches that’s 10 reboots per 2 years. Reaching 500 reboots would take 100 years. That’s probably not going to happen so the default value should be safe on a server.
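The estimate is just a ratio, so it is easy to redo with your own numbers. A tiny sketch, where `years_to_fill` is a made-up helper and the inputs are the assumed figures from this scenario rather than measurements:

```shell
#!/bin/sh
# Years until the log holds max_entries boots, given a reboot rate of
# `reboots` per `per_years` years. Integer math, so results round down.
years_to_fill() {
    max_entries=$1
    reboots=$2
    per_years=$3
    echo $((max_entries * per_years / reboots))
}

years_to_fill 500 10 2    # prints 100
```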

Other than that I’ve left everything else at its default value. Another noteworthy option is LOG_MINIMUM_UPTIME=1h. That means by default it will not record uptimes less than this value. If you want more detail you may want to consider lowering that to 1m (1 minute) or whatever your threshold is.
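Putting the two settings discussed above together, a dev box config might contain lines like these. This is a fragment and not a full file, and the values are the ones mentioned in this post rather than recommendations. I have not checked whether the config parser accepts trailing comments, so the comments sit on their own lines.

```shell
# /etc/uptimed.conf (fragment)

# 0 means keep an unlimited number of records.
LOG_MAXIMUM_ENTRIES=0

# Record any uptime of at least 1 minute instead of the 1h default.
LOG_MINIMUM_UPTIME=1m
```

The daemon probably needs a restart to pick up the change. On a systemd based distro that would presumably be `sudo systemctl restart uptimed`, assuming the service is named after the package.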

A couple of flags:

- -b sort by boot up date instead of uptime (newest on bottom)

- -B same as -b but in reverse (newest on top)

- -m <COUNT> to show X number of entries instead of 10 by default

You can run uprecords --help to see what else is available.

The video below demonstrates running most of the commands above and more.

# Demo Video

Timestamps

- 0:29 – Using the uptime command

- 1:49 – Using the who command

- 3:02 – Using the last command

- 4:09 – Narrowing down last with shutdowns or reboots

- 4:37 – I used the last command to help solve a real world problem

- 6:43 – Tracking uptime and downtime for the lifetime of your box

- 7:32 – Looking at the default output of uprecords

- 8:55 – It can calculate total downtime through deduction

- 10:07 – Going over a few uprecords flags for sorting

- 11:13 – Taking a look at a few year old machine using uprecords

- 12:25 – Showing more than 10 records with uprecords

What’s your preferred way to track uptime? Let me know below!