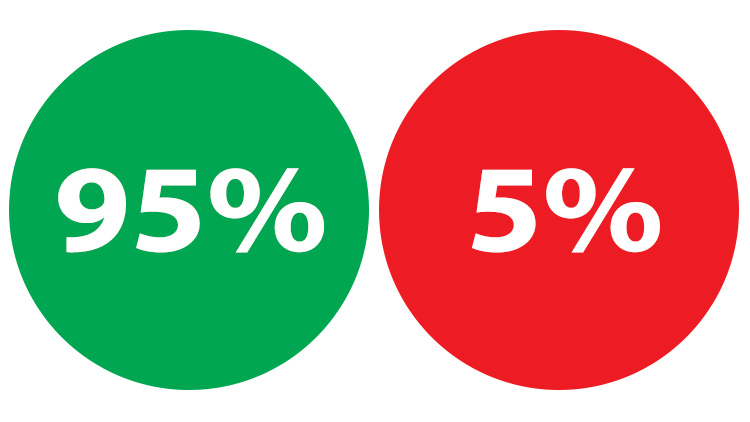

Optimize Your Programming Decisions for the 95%, Not the 5%

Many years ago I used to optimize a lot of my programming decisions for 'what if' conditions or the 5%. That was a mistake.

A few weeks ago I came across an interesting post title on Hacker News:

"Why I wrote 33 VSCode extensions and how I manage them".

That title really grabbed my attention, so I did what most of us do, which is head straight to the comments before reading the article.

That’s where I discovered this comment:

My problem with adding plugins or extending my environment much past the default is that eventually I have to deal with a co-worker’s non-extended default installation. I end up relying too much on the add-ins.

Reading that really hit home for me, because that’s how I used to think a long time ago.

But then I came across this comment:

I strongly dislike the reasoning that suggests you should hamstring yourself 100% of the time to accommodate a potential situation that may affect you 5% of the time.

“I don’t use multiple monitors because sometimes I’m just with a laptop”.

“I don’t customize my shell because sometimes I have to ssh to a server”

“I don’t customize my editor because sometimes I have to use a coworker’s editor”.

And here we are because I think this is a really underrated topic.

# “What if” Conditions

Many years ago I remember avoiding Bash aliases because, you know, what if I ssh into a server? It might not have those aliases and then I’m done for!

I was optimizing my development environment for the 5% and all it did was set things up to be a constant struggle.

The crazy thing is, back then it made a lot of sense in my mind. It’s very easy to talk yourself into agreeing with some of the quotes listed above and many more.

But optimizing for the 5% is an example of optimizing for the “what if” scenario.

You do everything in your power to make sure what you’re doing is generic enough to work everywhere, but what you’re really doing is making things harder for yourself in the 95% case, and the 95% is what matters most.

# It Affects the Code You Write

This isn’t only about development environment decisions either. It also affects the code you write.

If you try to write something to be fully generic from the beginning because “what if I make another application and it needs to register users?” then you typically make your initial implementation a lot worse.

Unless you have a deep understanding of what you’re developing and have put in the time to come up with good abstractions based on real experience, you’re just shooting in the dark, hoping your generic user system works for all cases when you haven’t even programmed it for 1 use case yet. How is that even possible to do?
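To make that concrete, here is a minimal sketch of what programming it for 1 use case might look like. Everything in it is an assumption made up for illustration (the `User` fields, the `register_user` name, the in-memory dictionary standing in for a database). The point is only that it solves registration for this one app and nothing more.

```python
import hashlib
import os
from dataclasses import dataclass


@dataclass
class User:
    # Hypothetical fields: only what this one app needs today.
    email: str
    password_hash: bytes
    salt: bytes


# In-memory store standing in for a real database (an assumption for this sketch).
_users = {}


def register_user(email: str, password: str) -> User:
    """Register a user for this one app: no plugin hooks, no pluggable backends."""
    email = email.strip().lower()
    if "@" not in email:
        raise ValueError("invalid email")
    if email in _users:
        raise ValueError("email already registered")

    # Salted PBKDF2 hash from the standard library.
    salt = os.urandom(16)
    password_hash = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 100_000)

    user = User(email=email, password_hash=password_hash, salt=salt)
    _users[email] = user
    return user
```

If a second app ever needs user registration, you will have 2 real use cases to compare, and that is a much better starting point for an abstraction than a guess.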

# It Affects How You Architect Your Applications

When you blindly follow what Google and other massive companies are doing, you’re optimizing for the 5% in a slightly different way.

Instead of just getting your app up and running and seeing how it goes, you try to make decisions so that your application can be developed by 100 different teams sprawling across 5,000 developers.

Meanwhile, for nearly all new projects, it’s just you developing the app by yourself.

# It Affects How You Deploy Your Applications

When you try to optimize your deployment strategy to handle a billion requests a second from day 1, you’re just setting yourself up for an endless loop of theory-based research.

It often includes spending months looking at things like how to set up a mysterious and perfect auto-healing, auto-scaling, multi-datacenter Kubernetes cluster, but it leads nowhere because these solutions aren’t generic enough to work for all cases without a lot of app-specific details.

Do you ever wonder why Google, Netflix, GitHub, etc. only give bits and pieces of information about their deployment infrastructure? It’s because sharing just enough improves their chances of ending up with better tools for themselves specifically.

What better way to get people interested and working on their open source projects than to make these tools look as attractive as possible and then back it up with “we’re using this to serve 20 billion page views a month so you know it works!”.

It’s a compelling story for sure, but it’s never as simple as plugging in 1 tool like Kubernetes and getting a perfect cluster that works for your app the way you envision it in your head.

It’s easy to look at a demo based on a toy example and see it work, but all that does is make the tool look like paradise from the outside. It’s not the full story.

As soon as you start trying to make it work for a real application, or more specifically, your application, it all falls apart until you spend the time and really learn what it takes to scale an application (which is more than just picking tools).

The companies that created these tools have put in the time over the years and have that knowledge, but that knowledge is specific to their application.

They might leverage specific tools that make the process easier, and tools like Kubernetes absolutely have value, but the tools aren’t the full story.

What if putting your app on a single $40 / month DigitalOcean server let you have zero downtime deploys and handle 2 million page views a month with tens of thousands of people using your app, without breaking a sweat, and all without Kubernetes or flipping your entire app architecture upside down to use “Serverless” technologies?
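For a sense of scale, here is a rough back-of-the-envelope calculation of what 2 million page views a month means per second. The 30-day month and the 10x peak factor are assumptions picked for illustration, not measurements. A page view is also not the same thing as a request, since 1 page view can trigger several requests.

```python
SECONDS_PER_MONTH = 30 * 24 * 60 * 60  # assumes a 30-day month


def average_per_second(page_views_per_month: int) -> float:
    """Average page views per second if traffic were spread evenly."""
    return page_views_per_month / SECONDS_PER_MONTH


avg = average_per_second(2_000_000)

# Hypothetical multiplier: real traffic is bursty, so assume the busiest
# moments are 10x the average. This number is a guess for illustration.
PEAK_FACTOR = 10
peak = avg * PEAK_FACTOR

print(f"average: {avg:.2f} page views/second")      # average: 0.77 page views/second
print(f"assumed peak: {peak:.1f} page views/second")  # assumed peak: 7.7 page views/second
```

Even with that generous peak assumption, the number is nowhere near the billion requests a second from the “what if” scenario.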

# I Used to Do All of the Above Too

I’ve been saying “you” a lot in this post, but I’m not targeting you specifically or talking down to the programming community as a whole.

I’ve done things similar to everything written above, just with different tools and different decisions, because technologies have changed over time.

I can distinctly remember when all of this switched in my head too. It was when Node first came out about 8 years ago.

I remember being fairly happy using PHP, writing apps, shipping apps, freelancing, etc. But then I watched Ryan Dahl’s talk on Node (he created Node) and I started to drink the Kool-Aid for about 6 months straight.

Thoughts like “Holy shit, event loops!”, “OMG web scale!”, “1 language for the back-end and front-end? Shut up and take my money.” were now buzzing through my head around the clock.

So all I did was read about Node while barely writing any code. Eventually I did start writing code, and while I learned quite a bit about programming patterns and generally improved as a developer, I realized none of the Node bits mattered.

And that’s mainly because back-end and front-end development is always going to have a context switch, even if you use the same language for both, and lots of languages have solutions for helping with concurrency.

Those 6 or so months were some of the most unproductive and unhappy of my entire development life. Not because JavaScript sucks that hard, but because when you’re on the outside, not doing anything and wondering “what if”, it really takes a toll on you.

I’m still thankful I went through that phase because it really opened my eyes and drastically changed how I thought about everything – even outside of programming.

# Premature Optimization Is the Root of All Evil

Donald Knuth said it best in 1974 when he wrote:

Premature optimization is the root of all evil.

Optimizing for the 5% is a type of premature optimization. Maybe not so much for your development environment choices, but certainly for the other cases.

Base your decisions on optimizing for the 95%, keep it simple and see how it goes. In other words, optimize when you really need to, not because of “what if”.

What are some cases where you optimized for the 5%? Let me know below.