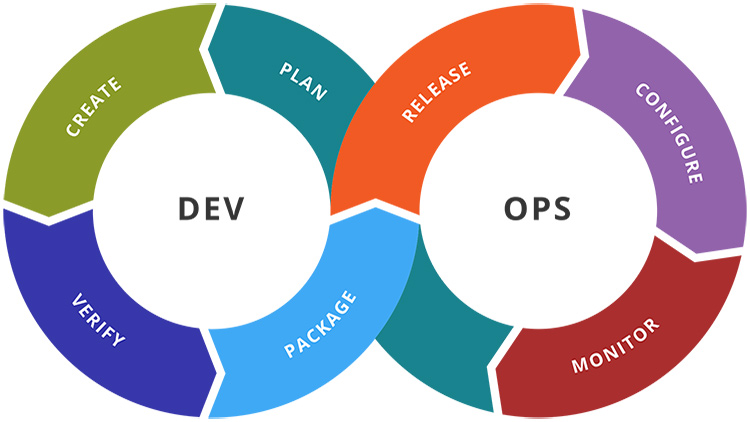

# What Is DevOps?

Someone recently asked me to define DevOps. This is one of those terms where everyone has a slightly different definition.

When I think of “DevOps”, the first thing that comes to mind is taking on the responsibility of not only developing your own applications, but also deploying them.

Keep in mind that this comes from the point of view of a freelancer who isn’t working at a large organization. My definition might sound scary to sysadmins and operations people, as if developers were arming themselves with the knowledge to put them out of a job.

But that’s not the case. Both “employment roles” are still distinct. In larger organizations, you might have both developers and operations working together on the same team.

Instead of throwing code over a wall as a developer, you’ll get a taste of operations because your ops teammate will be communicating with you regularly. Operations will also get a taste of development, which gives them a better understanding of how to roll out the application to production. Everyone wins and everyone keeps their own role / specialty / paycheck.

The overall goal of “DevOps” is to create and deploy applications faster and more reliably. There are many degrees of DevOps, and it’s not an all-or-nothing thing.

To me, it’s a combination of a mindset shift and a bunch of implementation details.

# What Type of Developer Are You?

I’m all about the solo developer experience. I think it’s awesome that we live in a world where a single person can come up with an idea and then execute it from start to finish.

This covers everything from developing the application to releasing it to the world. It even includes non-developer topics such as marketing, copywriting and all of that jazz.

That’s just me personally, but let’s limit this discussion to developer-only topics.

On one hand, you have developers who are only interested in writing code. They only care about opening their code editor, writing app features and giving someone else the task of figuring out how to make it run in production.

Running in production could mean a bunch of different things depending on what you’re developing, but let’s talk about this in the context of web application development where production means having the site running on a public domain name.

There’s nothing wrong with this type of developer, so if you’re one of these developers and want to keep it that way, I’m certainly not attacking or making fun of you.

On the other hand, you have developers who want to do the above, but who also deeply care about how their applications run in production.

Suddenly, you need to take off your app developer hat and put on a new one. Now you’re thinking about things like “I wonder how many resources my app uses, because that will determine what server I’ll need to rent or buy”.
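One low-effort way to start answering that question during development is to measure the peak memory of a code path. Here is a minimal sketch using Python’s standard `tracemalloc` module; the two `total_length_*` functions are hypothetical stand-ins for loading data eagerly versus streaming it. Keep in mind that `tracemalloc` only sees allocations made through Python’s allocator, so it understates what a real process uses; OS-level tools are still needed for the full picture.

```python
import tracemalloc
from typing import Callable


def peak_bytes(fn: Callable[[], object]) -> int:
    """Return the peak number of bytes Python allocated while fn() ran."""
    tracemalloc.start()
    try:
        fn()
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()  # also discards the collected traces
    return peak


N = 200_000  # hypothetical number of "rows"


def total_length_eager() -> int:
    # Materializes every row in a list before using any of them.
    rows = [f"row {i}" * 5 for i in range(N)]
    return sum(len(row) for row in rows)


def total_length_streaming() -> int:
    # Produces and discards one row at a time via a generator.
    return sum(len(f"row {i}" * 5) for i in range(N))


print(f"eager:     {peak_bytes(total_length_eager):>12,} bytes")
print(f"streaming: {peak_bytes(total_length_streaming):>12,} bytes")
```

Both functions compute the same total; only the eager version holds every row in memory at once, which is exactly the kind of difference that goes unnoticed on a roomy development machine.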

## Which Developer Is Better?

Both developers can be super talented at what they do, and both can be highly successful in their fields. Heck, both can even build and release applications on their own, because services exist to offload some of the operations-related tasks, but…

I believe that someone who deeply thinks about the entire life cycle of their application will become better at developing applications because they know the whole story.

They will write better code because they’ll know the limitations of what’s possible, be aware of performance-related pitfalls that don’t spring up in development, and ultimately know how all of the pieces of the system connect.

Also, someone who knows the full story will be able to troubleshoot real-life issues in production faster. They will know what to look for in log files and how to fix what they find, and their spidey sense will tingle when it comes to predicting performance issues.
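Knowing what to look for in log files often starts with a quick script. Below is a minimal Python sketch; the log format, the 500 ms threshold and the `summarize` function are all hypothetical, so adapt the regex to whatever your web server actually writes. It counts 5xx responses and slow requests per path, and reports lines it couldn’t parse instead of silently dropping them.

```python
import re
from collections import Counter
from typing import Iterable

# Hypothetical access-log format, one request per line:
#   <timestamp> <METHOD> <path> <status> <duration>ms
LINE_RE = re.compile(
    r"^(?P<ts>\S+) (?P<method>[A-Z]+) (?P<path>\S+) (?P<status>\d{3}) (?P<ms>\d+)ms$"
)


def summarize(lines: Iterable[str], slow_ms: int = 500) -> dict:
    """Count 5xx responses and slow requests per path; tally unparseable lines."""
    server_errors = 0
    unparsed = 0
    slow_by_path = Counter()
    for line in lines:
        match = LINE_RE.match(line.strip())
        if match is None:
            unparsed += 1
            continue
        if match["status"].startswith("5"):
            server_errors += 1
        if int(match["ms"]) >= slow_ms:
            slow_by_path[match["path"]] += 1
    return {
        "server_errors": server_errors,
        "slow_by_path": dict(slow_by_path),
        "unparsed": unparsed,
    }


sample = [
    "2024-01-01T12:00:00Z GET /posts 200 35ms",
    "2024-01-01T12:00:01Z GET /posts 200 1840ms",
    "2024-01-01T12:00:02Z POST /login 502 30012ms",
    "a line from some other process",
]
print(summarize(sample))
```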

For example, a developer who cares very little about deploying their own code might say this to an ops person: “It runs fine on my machine, stop bothering me with your problems, it’s not my fault that the server crumbles under load”.

Meanwhile, that developer unleashed an N+1 query that resulted in 875 SQL queries being run on a heavily trafficked page, and to make matters worse, that developer also thinks “cache” is some hipster way of spelling “cash”.

Oh yeah, they also have an 8-core development box with 16GB of RAM, but the server only has 2 CPU cores and 2GB of RAM. In development mode, zero thought is given to memory usage and performance. That’s a very dangerous mindset.
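To make the N+1 query in that story concrete, here is a minimal sketch using Python’s built-in `sqlite3` module. The `posts`/`authors` schema and the `QueryCounter` helper are hypothetical; what matters is the number of round trips. Loading 874 posts and then looking up each post’s author one at a time issues 875 queries, while a single `JOIN` returns the same rows in one query.

```python
import sqlite3


def make_db(num_posts: int) -> sqlite3.Connection:
    """Build a throwaway in-memory database with a hypothetical posts/authors schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE authors (id INTEGER PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT,"
        " author_id INTEGER REFERENCES authors(id))"
    )
    conn.executemany(
        "INSERT INTO authors (id, name) VALUES (?, ?)",
        [(i, f"author {i}") for i in range(10)],
    )
    conn.executemany(
        "INSERT INTO posts (id, title, author_id) VALUES (?, ?, ?)",
        [(i, f"post {i}", i % 10) for i in range(num_posts)],
    )
    conn.commit()
    return conn


class QueryCounter:
    """Wraps a connection and counts every query sent to the database."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.count = 0

    def query(self, sql: str, params: tuple = ()) -> list:
        self.count += 1
        return self.conn.execute(sql, params).fetchall()


def posts_with_authors_n_plus_1(db: QueryCounter) -> list:
    # 1 query for the posts...
    posts = db.query("SELECT title, author_id FROM posts ORDER BY id")
    # ...plus 1 query per post for its author: N + 1 queries in total.
    return [
        (title, db.query("SELECT name FROM authors WHERE id = ?", (author_id,))[0][0])
        for title, author_id in posts
    ]


def posts_with_authors_join(db: QueryCounter) -> list:
    # The database does the matching, so it's 1 query no matter how many posts.
    return db.query(
        "SELECT p.title, a.name FROM posts AS p"
        " JOIN authors AS a ON a.id = p.author_id ORDER BY p.id"
    )


conn = make_db(874)
slow, fast = QueryCounter(conn), QueryCounter(conn)
same_rows = posts_with_authors_n_plus_1(slow) == posts_with_authors_join(fast)
print(same_rows, slow.count, fast.count)  # True 875 1
```

On an in-memory SQLite database both versions feel instant, which is exactly why the problem hides in development; against a networked database on a busy page, each of those extra queries is a separate round trip.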

# Implementing DevOps in Real Life

As mentioned previously, DevOps isn’t an all-or-nothing thing. You can choose how far you want to go down the DevOps rabbit hole.

If you’re a one-person army, you won’t be able to avoid it completely.

Even if you use a service like Heroku, you still need to think about things like how many resources you need for both your application and its database(s).
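A back-of-the-envelope version of that calculation, as a Python sketch. The per-worker, database and OS figures below are placeholder assumptions, not measurements; the `2 * cores + 1` starting point is the rule of thumb Gunicorn’s documentation suggests for its worker count, and `max_workers` is a hypothetical helper. Plugging in the hardware from the earlier example shows memory, not CPU, becoming the limit on the 2GB server.

```python
def max_workers(
    cpu_cores: int,
    ram_mb: int,
    per_worker_mb: int,
    database_mb: int,
    os_reserve_mb: int,
) -> int:
    """Hypothetical sizing rule: start from 2 * cores + 1, then cap by what fits in RAM."""
    cpu_based = 2 * cpu_cores + 1
    ram_left_for_app = ram_mb - database_mb - os_reserve_mb
    ram_based = ram_left_for_app // per_worker_mb
    # Never report a negative worker count if the database alone eats the RAM.
    return max(0, min(cpu_based, ram_based))


# Assumed figures: 300MB per app worker, 512MB for the database, 256MB for the OS.
budget = dict(per_worker_mb=300, database_mb=512, os_reserve_mb=256)
print("2 cores / 2GB server: ", max_workers(cpu_cores=2, ram_mb=2048, **budget))
print("8 cores / 16GB laptop:", max_workers(cpu_cores=8, ram_mb=16384, **budget))
```

On the laptop the CPU rule is the binding constraint; on the small server the RAM left after the database is. Measure your own per-worker memory rather than trusting these numbers.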

Heroku makes it pretty easy to get up and running, but it comes at a major price. With Heroku you could end up paying over 10x the cost of rolling your own setup.

The upside is you can avoid having to learn some critical operations-related skills, so if it gets you off the ground faster, it might be reasonable in some cases. It depends on your goals.

I don’t want this article to turn into a Heroku vs XYZ comparison, but my point is Heroku is at the “easier but much more expensive” side of the spectrum.

On the other side of the spectrum, you would be responsible for figuring out which cloud hosting provider to use and even what distribution of Linux you should run.

There’s no end to what you could learn. Docker, Ansible, Continuous Integration, Linux server security and best practices are all on the table when rolling your own setup. You could choose not to use some of those tools, too. It’s up to you. There is no one right answer.

The upside is you’ll fully know your stack from top to bottom and it’s way cheaper than Heroku. Of course, the downside is you’ll need to figure out things as you go and it may delay your launch if you’re not already experienced.

## Which Route Is Best?

There is no best answer, but personally I prefer rolling my own set up and deploying applications to 1 server in most cases.

I find that too many people get hung up on details that don’t really matter, like trying to copy exactly how Facebook or Google deploy web applications. Chances are you won’t be serving billions of page views a day from the beginning while dealing with 20,000 developers.
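The arithmetic behind that point is worth doing once. Here is a quick Python sketch that converts daily page views into average requests per second; `requests_per_page` is an assumed multiplier (assets, API calls), and real traffic peaks well above the average, so treat the result as a floor rather than a capacity plan.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_requests_per_second(page_views_per_day: float, requests_per_page: float = 1.0) -> float:
    """Average load, assuming traffic is spread evenly across the day."""
    return page_views_per_day * requests_per_page / SECONDS_PER_DAY


for views in (100_000, 1_000_000, 1_000_000_000):
    rps = average_requests_per_second(views)
    print(f"{views:>13,} views/day ~ {rps:,.1f} req/s on average")
```

A million page views a day averages out to under a dozen requests per second, which is a long way from the scale that forces a multi-server architecture.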

Deployment strategies are no different from coding. There’s an evolution to what you come up with.

For example, you don’t just start off from day 1 with super nice looking code. You start off with code that works, which may have poor abstractions and other issues, but at the end of the day it works.

Then, as the project matures, you find patterns, and clean abstractions become obvious because you’ve hit the pain points firsthand.

Deploying apps is the same way. I highly recommend deploying to 1 server using best practices and using tools to help you automate the process. Then, if you hit the big time and need to scale to tens of millions of users you can consider moving to multiple servers.
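As one illustration of automating a single-server deploy, here is a minimal fail-fast step runner in Python. The `run_deploy` helper and the `DEPLOY_STEPS` commands (a `git pull` followed by Docker Compose) are hypothetical placeholders for whatever your own process needs; the point is that every deploy runs the same steps in the same order and stops at the first failure instead of blindly carrying on.

```python
import subprocess
from typing import Sequence


def run_deploy(steps: Sequence[Sequence[str]]) -> list:
    """Run each command in order and stop at the first non-zero exit code.

    Returns the commands that completed successfully. A missing executable
    raises FileNotFoundError from subprocess.run, which also halts the deploy.
    """
    completed = []
    for cmd in steps:
        result = subprocess.run(list(cmd))
        if result.returncode != 0:
            print(f"deploy stopped: {' '.join(cmd)!r} exited with {result.returncode}")
            break
        completed.append(list(cmd))
    return completed


# Hypothetical steps; substitute whatever your own deploy actually needs.
DEPLOY_STEPS = [
    ["git", "pull", "--ff-only"],
    ["docker", "compose", "build"],
    ["docker", "compose", "up", "-d"],
]
```

Calling `run_deploy(DEPLOY_STEPS)` on the server (or from a CI job over SSH) replaces a list of commands you would otherwise type from memory.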

You can still create really clean and awesome solutions for deploying to 1 server. You can also design your applications in such a way that running them on 1 or 100 servers is no different. This isn’t tied to a specific web framework either.
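One common way to get there, popularized by the Twelve-Factor App methodology, is to read per-deployment settings from environment variables and keep state such as sessions and uploads in backing services rather than on any one server. Here is a minimal Python sketch of the configuration half; the variable names (`DATABASE_URL`, `CACHE_URL`, `WEB_CONCURRENCY`), the defaults and the `AppConfig` class are assumptions, not requirements of any framework.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class AppConfig:
    database_url: str
    cache_url: str
    web_concurrency: int

    @classmethod
    def from_env(cls, env: Mapping[str, str] = os.environ) -> "AppConfig":
        """Build config from environment variables instead of per-server files."""
        return cls(
            # Required: a missing value raises KeyError so the app fails fast at boot.
            database_url=env["DATABASE_URL"],
            # Optional, with hypothetical defaults suitable for local development.
            cache_url=env.get("CACHE_URL", "redis://localhost:6379/0"),
            web_concurrency=int(env.get("WEB_CONCURRENCY", "2")),
        )
```

Each server calls `AppConfig.from_env()` at startup and runs identical code, differing only in its environment, so going from 1 server to several is mostly a matter of configuration rather than code changes.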

So, that’s my take on DevOps and how it applies to a software developer.

What DevOps related tools and strategies are you using? Let me know below.